Zipf's law

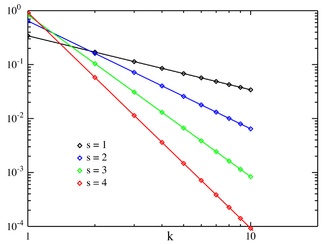

Probability mass function Zipf PMF for N = 10 on a log-log scale. The horizontal axis is the index k . (Note that the function is only defined at integer values of k. The connecting lines do not indicate continuity.) |

|

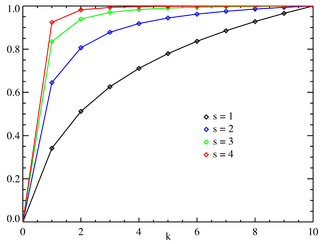

Cumulative distribution function Zipf CMF for N = 10. The horizontal axis is the index k . (Note that the function is only defined at integer values of k. The connecting lines do not indicate continuity.) |

|

| parameters: |  (real) (real) (integer) (integer) |

|---|---|

| support: |  |

| pmf: |  |

| cdf: |  |

| mean: |  |

| median: | |

| mode: |  |

| variance: | |

| skewness: | |

| ex.kurtosis: | |

| entropy: |  |

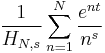

| mgf: |  |

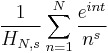

| cf: |  |

Zipf's law, an empirical law formulated using mathematical statistics, refers to the fact that many types of data studied in the physical and social sciences can be approximated with a Zipfian distribution, one of a family of related discrete power law probability distributions. The law is named after the linguist George Kingsley Zipf (pronounced /ˈzɪf/) who first proposed it (Zipf 1935, 1949), though J.B. Estoup appears to have noticed the regularity before Zipf.[1]

Contents |

Motivation

Zipf's law states that given some corpus of natural language utterances, the frequency of any word is inversely proportional to its rank in the frequency table. Thus the most frequent word will occur approximately twice as often as the second most frequent word, which occurs twice as often as the fourth most frequent word, etc. For example, in the Brown Corpus "The" definitive article is the most frequently occurring word, and by itself accounts for nearly 7% of all word occurrences (69,971 out of slightly over 1 million). True to Zipf's Law, the second-place word "of" accounts for slightly over 3.5% of words (36,411 occurrences), followed by "and" (28,852). Only 135 vocabulary items are needed to account for half the Brown Corpus.

The same relationship occurs in many other rankings, unrelated to language, such as the population ranks of cities in various countries, corporation sizes, income rankings, etc. The appearance of the distribution in rankings of cities by population was first noticed by Felix Auerbach in 1913.[2] Empirically a data set can be tested to see if Zipf's law applies by running the regression log R = a - b log n where R is the rank of the datum, n is its value and a and b are constants. For Zipf's law to apply the constant b = 1. When this regression is applied to cities a better fit has been found with b = 1.07.

Theoretical review

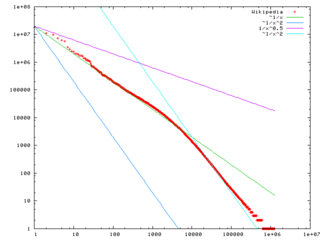

Zipf's law is most easily observed by plotting the data on a log-log graph, with the axes being log(rank order) and log(frequency). For example, the word "the" (as described above) would appear at x = log(1), y = log(69971). The data conform to Zipf's law to the extent that the plot is linear.

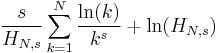

Formally, let:

- N be the number of elements;

- k be their rank;

- s be the value of the exponent characterizing the distribution.

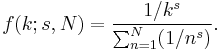

Zipf's law then predicts that out of a population of N elements, the frequency of elements of rank k, f(k;s,N), is:

Zipf's law holds if the number of occurrences of each element are independent and identically distributed random variables with power law distribution  [3]

[3]

In the example of the frequency of words in the English language, N is the number of words in the English language and, if we use the classic version of Zipf's law, the exponent s is 1. f(k; s,N) will then be the fraction of the time the kth most common word occurs.

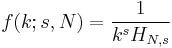

The law may also be written:

where HN,s is the Nth generalized harmonic number.

The simplest case of Zipf's law is a "1⁄f function". Given a set of Zipfian distributed frequencies, sorted from most common to least common, the second most common frequency will occur ½ as often as the first. The third most common frequency will occur ⅓ as often as the first. The nth most common frequency will occur 1⁄n as often as the first. However, this cannot hold exactly, because items must occur an integer number of times; there cannot be 2.5 occurrences of a word. Nevertheless, over fairly wide ranges, and to a fairly good approximation, many natural phenomena obey Zipf's law.

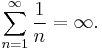

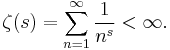

Mathematically, it is impossible for the classic version of Zipf's law to hold exactly if there are infinitely many words in a language, since the sum of all relative frequencies in the denominator above is equal to the harmonic series and therefore:

In English, the word frequencies have a very heavy-tailed distribution, and can therefore be modeled reasonably well by a Zipf distribution with an s close to 1.

As long as the exponent s exceeds 1, it is possible for such a law to hold with infinitely many words, since if s > 1 then

where ζ is Riemann's zeta function.

Statistical explanation

It is not known why Zipf's law holds for most languages.[4] However, it may be partially explained by the statistical analysis of randomly-generated texts. Wentian Li has shown that if you create a document where each character is chosen randomly from a uniform distribution of all letters (plus a space character), then the "words" in this document also follow the general trend of Zipf's law (appearing approximately linear on log-log plot).[5] This suggests a partial explanation for why Zipf's law might hold for most natural languages (although the law holds much more strongly to natural language than to random texts).

An alternate explanation is that Zipf's Law arises necessarily from features of natural language. It is proposed that this occurs because neither speakers nor hearers using a given language want to work any harder than necessary to reach understanding, and the process that results in approximately equal distribution of effort leads to the observed Zipf distribution.[6]

Related laws

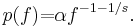

Zipf's law now refers more generally to frequency distributions of "rank data," in which the relative frequency of the nth-ranked item is given by the Zeta distribution, 1/(nsζ(s)), where the parameter s > 1 indexes the members of this family of probability distributions. Indeed, Zipf's law is sometimes synonymous with "zeta distribution," since probability distributions are sometimes called "laws". This distribution is sometimes called the Zipfian or Yule distribution.

A generalization of Zipf's law is the Zipf–Mandelbrot law, proposed by Benoît Mandelbrot, whose frequencies are:

The "constant" is the reciprocal of the Hurwitz zeta function evaluated at s.

Zipfian distributions can be obtained from Pareto distributions by an exchange of variables.[7]

The tail frequencies of the Yule–Simon distribution are approximately

for any choice of ρ > 0.

If the natural log of some data are normally distributed, the data follow the log-normal distribution. This distribution is useful when random influences have an effect that is multiplicative rather than additive.

In the parabolic fractal distribution, the logarithm of the frequency is a quadratic polynomial of the logarithm of the rank. This can markedly improve the fit over a simple power-law relationship.[7] Like fractal dimension, it is possible to calculate Zipf dimension, which is a useful parameter in the analysis of texts.[8]

It has been argued that Benford's law is a special case of Zipf's law.[7] This special connection between these two laws can be explained by the fact that they both originate from the same scale invariant functional relation from statistical physics and critical phenomena.[9]

See also

- Benford's law

- Bradford's law

- Demographic gravitation

- Heaps' law

- Hapax legomenon

- Jean-Baptiste Estoup

- Lorenz curve

- Lotka's law

- Moore's law

- Pareto distribution

- Pareto principle aka the "80-20 rule"

- Power law

- Principle of least effort

- Rank-size distribution

- Zipf–Mandelbrot law

References

- ↑ Christopher D. Manning, Hinrich Schütze Foundations of Statistical Natural Language Processing, MIT Press (1999), ISBN 978-0262133609, p. 24

- ↑ Auerbach F. (1913) Das Gesetz der Bevölkerungskonzentration. Petermann’s Geographische Mitteilungen 59, 74–76

- ↑ Adamic, Lada A."Zipf, Power-laws, and Pareto - a ranking tutorial"

- ↑ Léon Brillouin, La science et la théorie de l'information, 1959, réédité en 1988, traduction anglaise rééditée en 2004

- ↑ Wentian Li (1992). "Random Texts Exhibit Zipf's-Law-Like Word Frequency Distribution". IEEE Transactions on Information Theory 38 (6): 1842–1845. doi:10.1109/18.165464. http://www.nslij-genetics.org/wli/pub/ieee92_pre.pdf.

- ↑ Ramon Ferrer i Cancho and Ricard V. Sole (2003). "Least effort and the origins of scaling in human language". Proceedings of the National Academy of Sciences of the United States of America 100 (3): 788–791. doi:10.1073/pnas.0335980100. PMID 12540826. PMC 298679. http://www.pnas.org/content/100/3/788.abstract?sid=cc7fae18-87c9-4b67-863a-4195bb47c1d1.

- ↑ 7.0 7.1 7.2 Johan Gerard van der Galien (2003-11-08). "Factorial randomness: the Laws of Benford and Zipf with respect to the first digit distribution of the factor sequence from the natural numbers". http://home.zonnet.nl/galien8/factor/factor.html.

- ↑ Ali Eftekhari (2006) Fractal geometry of texts. Journal of Quantitative Linguistic 13(2-3): 177 – 193.

- ↑ L. Pietronero, E. Tosatti, V. Tosatti, A. Vespignani (2001) Explaining the uneven distribution of numbers in nature: The laws of Benford and Zipf. Physica A 293: 297 – 304.

Further reading

Primary:

- George K. Zipf (1949) Human Behavior and the Principle of Least-Effort. Addison-Wesley.

- George K. Zipf (1935) The Psychobiology of Language. Houghton-Mifflin. (see citations at http://citeseer.ist.psu.edu/context/64879/0 )

Secondary:

- Gelbukh, Alexander, and Sidorov, Grigori (2001) "Zipf and Heaps Laws’ Coefficients Depend on Language". Proc. CICLing-2001, Conference on Intelligent Text Processing and Computational Linguistics, February 18–24, 2001, Mexico City. Lecture Notes in Computer Science N 2004, ISSN 0302-9743, ISBN 3-540-41687-0, Springer-Verlag: 332–335.

- Damián H. Zanette (2006) "Zipf's law and the creation of musical context," Musicae Scientiae 10: 3-18.

- Kali R. (2003) "The city as a giant component: a random graph approach to Zipf's law," Applied Economics Letters 10: 717-720(4)

- Gabaix, Xavier (August 1999). "Zipf's Law for Cities: An Explanation". Quarterly Journal of Economics 114 (3): 739–67. doi:10.1162/003355399556133. ISSN 0033-5533. http://pages.stern.nyu.edu/~xgabaix/papers/zipf.pdf.

External links

- Steven, Strogatz (2009-05-29). "Guest Column: Math and the City". http://judson.blogs.nytimes.com/2009/05/19/math-and-the-city/. Retrieved 2009-05-29 -- An article on Zipf's law applied to city populations

- Comprehensive bibliography of Zipf's law

- Seeing Around Corners (Artificial societies turn up Zipf's law)

- PlanetMath article on Zipf's law

- Distributions de type “fractal parabolique” dans la Nature (French, with English summary)

- An analysis of income distribution

- Zipf List of French words

- Zipf list English words (extracted from www.opfine.com , jane16 engine)

- Zipf list for English, French, Spanish, Italian, Swedish, Icelandic, Latin, Portugese and Finnish from Gutenberg Project and online calculator to rank words in texts

- Citations and the Zipf-Mandelbrot's law

- Zipf's Law for U.S. Cities by Fiona Maclachlan, Wolfram Demonstrations Project.

- Weisstein, Eric W., "Zipf's Law" from MathWorld.

- Zipf's Law examples and modelling (1985)

|

||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

![f(k;N,q,s)=[\mbox{constant}]/(k+q)^s.\,](/2010-wikipedia_en_wp1-0.8_orig_2010-12/I/c80de7116cb6a160f4bca6a4dee5094a.png)

![f(k;\rho) \approx [\mbox{constant}]/k^{\rho+1}](/2010-wikipedia_en_wp1-0.8_orig_2010-12/I/ff1f9b6dd75c89477e5e5f13cda6d9a3.png)